Benefits of load balancing NetApp StorageGRID

Load balancing NetApp StorageGRID provides:

- High Availability (HA): Load balancers monitor the health of individual Storage Nodes. If a node fails or becomes unreachable, the load balancer automatically stops routing new traffic to it. This removes single points of failure, so object storage services remain available to client applications even when individual components fail. In multi-site deployments, Global Server Load Balancing (GSLB) can direct client traffic to the nearest or best-performing data center, providing site resilience and automated failover if an entire site goes offline.

- Performance: Load balancing distributes client connections and the ingest/retrieval workload (S3 or Swift API requests) evenly across all available Storage Nodes. This prevents any single node from becoming overwhelmed and turning into a bottleneck, which reduces latency and increases throughput for the system as a whole.

- Scalability: As data storage needs grow, more Storage Nodes can be added, and the load balancer automatically includes them in the resource pool, allowing the system's performance and capacity to scale out as the grid grows.
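To make these three benefits more concrete, here is a minimal Python sketch of a pool that rotates requests across Storage Nodes and skips any node whose latest health check failed. It is illustrative only: the class and method names are hypothetical, the round-robin policy is an assumption, and this is not how StorageGRID's built-in load balancer or our appliances are actually implemented.

```python
from itertools import count


class StorageNodePool:
    """Hypothetical round-robin pool over Storage Nodes that passed their last health check."""

    def __init__(self, nodes):
        # Every node starts out marked healthy; insertion order is preserved.
        self.healthy = {node: True for node in nodes}
        self._counter = count()

    def add_node(self, node):
        # Scalability: a newly added node joins the rotation on the next request.
        self.healthy[node] = True

    def mark(self, node, is_healthy):
        # High availability: record the outcome of the latest health check.
        self.healthy[node] = is_healthy

    def next_node(self):
        # Performance: rotate through the healthy nodes so no single node takes every request.
        candidates = [node for node, ok in self.healthy.items() if ok]
        if not candidates:
            raise RuntimeError("no healthy Storage Nodes available")
        return candidates[next(self._counter) % len(candidates)]
```

For example, with three nodes and the second marked unhealthy, successive calls alternate between the first and third nodes; calling `add_node` brings a new node into the rotation without any other change.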

About NetApp StorageGRID

NetApp StorageGRID is a software-defined, object-based storage solution that supports industry-standard object APIs such as Amazon S3 and Swift. It allows you to build a single namespace across many sites, with multiple service levels for metadata-driven object lifecycle policies. StorageGRID protects data through intelligent policies, with options including replication, erasure coding and cloud tiering. StorageGRID can be deployed as optimized hardware appliances, virtual machines, Docker containers, or a combination of all three.

It has three distinct node types:

- Admin Nodes: Admin Nodes provide system administration services such as system configuration, monitoring, and logging. Each StorageGRID system includes one primary Admin Node. The primary Admin Node hosts the Configuration Management Node (CMN) service, which manages system-wide configurations and grid tasks. For redundancy, a StorageGRID system can have additional, non-primary Admin Nodes.

- Storage Nodes: Storage Nodes manage the storage of objects to disk. This object management (covering both object data and object metadata) includes evaluating objects against information lifecycle management (ILM) rules to determine how each object's data is stored over time and protected from loss.

- Gateway Nodes (Optional): Gateway Nodes provide a load balancing interface to the StorageGRID system through which applications can connect. The Gateway Nodes host the Connection Load Balancer (CLB) service which acts as a switchboard for connecting clients to the most efficient Local Distribution Router (LDR) service.

Why Loadbalancer.org for NetApp StorageGRID?

Loadbalancer.org's intuitive Enterprise Application Delivery Controller (ADC) is designed to save time and money with a clever, not complex, WebUI.

Easily configure, deploy, manage, and maintain our Enterprise load balancer, reducing complexity and the risk of human error, with a difference you can see in minutes.

And with WAF and GSLB included out of the box, there are no hidden costs, so the prices you see on our website are fully transparent.


How to load balance NetApp StorageGRID

StorageGRID now includes a purpose-built load balancer, which is often a good choice for single-site deployments.

However, Loadbalancer.org appliances offer an optimized solution for load balancing StorageGRID deployments across multiple sites. Multi-site load balancing uses Global Server Load Balancing (GSLB), which our products include by default at no extra cost. Combined with DNS delegation, the GSLB functionality enables each load balancer to act as a smart DNS name server for the delegated subdomains (in this example, admin.company.com and s3.company.com).

Each StorageGRID node is regularly health-checked by each load balancer and this information is used when providing the smart DNS response to inbound DNS queries.
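As a rough illustration of how health-check results can shape the DNS answer, the sketch below probes each VIP with a plain TCP connect and returns only the addresses that respond. The function names are hypothetical, and the bare TCP connect is an assumption chosen for simplicity; a production health check may use a different method, timeout and interval. The `check` parameter is injectable so the selection logic can be exercised without a live grid.

```python
import socket


def tcp_health_check(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, unreachable hosts and timeouts.
        return False


def healthy_answers(vips, check=tcp_health_check):
    """Return the VIPs that pass their health check, for use in a DNS response.

    vips maps each VIP address to the port to probe (hypothetical layout).
    """
    return [ip for ip, port in vips.items() if check(ip, port)]
```

For example, `healthy_answers({"10.0.0.51": 18443})` would return `["10.0.0.51"]` only while something is accepting connections on that VIP and port.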

Load balancing deployment concept

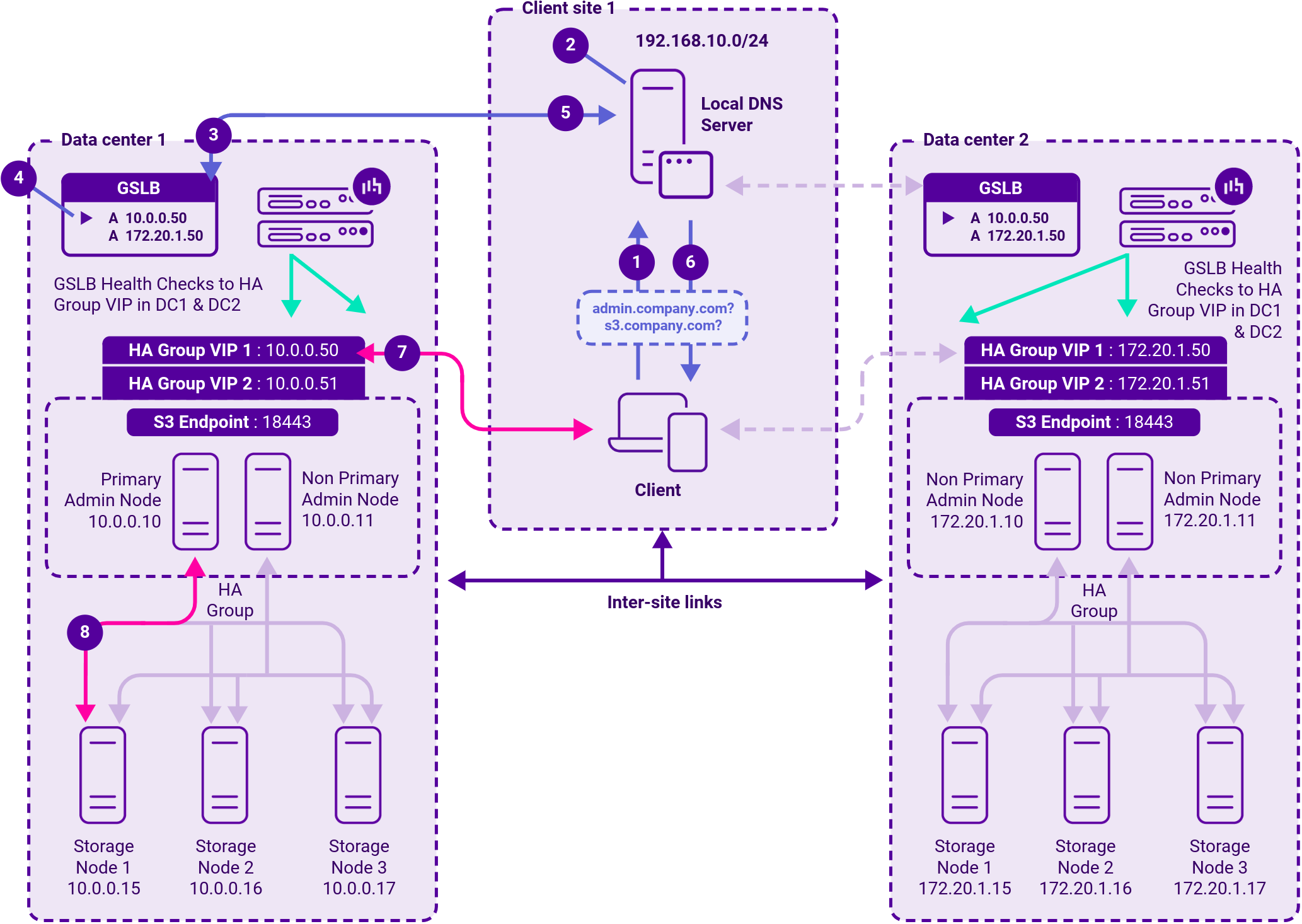

The scenario below shows a deployment over multiple sites where:

- Local server load balancing is delivered by the StorageGRID load balancer with endpoints configured on Admin Nodes and Gateway Nodes

- Inter-site load balancing is delivered by the Loadbalancer.org appliance (using GSLB functionality)

How a multi-site deployment works

- The client sends a DNS query for either admin.company.com or s3.company.com to the local DNS server.

- The local DNS server delegates the subdomain to all LB.org appliances (the LB.org appliances are configured as name servers for the subdomains).

- One of the LB.org appliances receives the delegated DNS query.

- If the query is for s3.company.com, the LB.org appliance selects HA Group VIP 2 (10.0.0.51) in DC 1 based on the GSLB topology configuration and GSLB health checks.

- The LB.org appliance returns the IP address to the DNS server.

- The DNS server returns the IP address to the client.

- The client connects to 10.0.0.51 on port 18443 for the S3 client connection.

- The active Admin Node (10.0.0.10) then load balances the connection to Storage Node 1 (10.0.0.15) based on the StorageGRID load balancing algorithm.
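The selection step in this walkthrough can be sketched in Python as follows, assuming a simple topology table that maps source subnets to sites. The subnet ranges, the DC 2 VIP (10.1.0.51) and the function name are hypothetical; only 10.0.0.51 comes from the example above. With DNS delegation, the GSLB normally sees the address of the querying DNS server rather than the client itself, so the sketch matches on that source address.

```python
import ipaddress

# Hypothetical topology: which source subnets are "local" to each data center.
TOPOLOGY = {
    "DC1": ipaddress.ip_network("10.0.0.0/16"),
    "DC2": ipaddress.ip_network("10.1.0.0/16"),  # hypothetical second site
}

# S3 HA Group VIPs per site: 10.0.0.51 is from the example, DC2's is hypothetical.
S3_VIPS = {"DC1": "10.0.0.51", "DC2": "10.1.0.51"}


def resolve_s3(source_ip, site_healthy):
    """Pick the VIP to return for s3.company.com.

    Prefer the site whose subnet contains the querying address (topology),
    but only if that site passed its health check; otherwise fail over to
    any other healthy site. Returns None if no site is healthy.
    """
    addr = ipaddress.ip_address(source_ip)
    local = [dc for dc, net in TOPOLOGY.items() if addr in net]
    remote = [dc for dc in TOPOLOGY if dc not in local]
    for dc in local + remote:
        if site_healthy.get(dc, False):
            return S3_VIPS[dc]
    return None
```

For example, a query arriving from 10.0.5.20 gets 10.0.0.51 while DC 1 is healthy, and is failed over to the DC 2 VIP if DC 1's health check fails.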

For more detail on single-site and multi-site NetApp StorageGRID deployments, please download our deployment guide below.